Posted by nickpierno

If you asked me, I’d tell you that proper tracking is the single most important element in your local business digital marketing stack. I’d also tell you that even if you didn’t ask, apparently.

A decent tracking setup allows you to answer the most important questions about your marketing efforts. What’s working and what isn’t?

Many digital marketing strategies today still focus on traffic. Lots of agencies/developers/marketers will slap an Analytics tracking code on your site and call it a day. For most local businesses, though, traffic isn’t all that meaningful a metric. And in many cases (e.g. Adwords & Facebook), more traffic just means more spending, without any real relationship to results.

What you really need your tracking setup to tell you is how many leads (AKA conversions) you’re getting, and from where. It also needs to do so quickly and easily, without you having to log into multiple accounts to piece everything together.

If you’re spending money or energy on SEO, Adwords, Facebook, or any other kind of digital traffic stream and you’re not measuring how many leads you get from each source, stop what you’re doing right now and make setting up a solid tracking plan your next priority.

This guide is intended to fill you in on all the basic elements you’ll need to assemble a simple, yet flexible and robust tracking setup.

Google Analytics

Google Analytics is at the center of virtually every good web tracking setup. There are other supplemental ways to collect web analytics (like Heap, Hotjar, Facebook Pixels, etc), but Google Analytics is the free, powerful, and omnipresent tool that virtually every website should use. It will be the foundation of our approach in this guide.

Analytics setup tips

Analytics is super easy to set up. Create (or sign into) a Google account, add your Account and Property (website), and install the tracking code in your website’s template.

Whatever happens, don’t let your agency or developer set up your Analytics property on their own Account. Agencies and developers: STOP DOING THIS! Create a separate Google/Gmail account and let this be the “owner” of a new Analytics Account, then share permission with the agency/developer’s account, the client’s personal Google account, and so on.

The “All Website Data” view will be created by default for a new property. If you’re going to add filters or make any other advanced changes, be sure to create and use a separate View, keeping the default view clean and pure.

Also be sure to set the appropriate currency and time zone in the “View Settings.” If you ever use Adwords, using the wrong currency setting will result in a major disagreement between Adwords and Analytics.

Goals

Once your basic Analytics setup is in place, you should add some goals. This is where the magic happens. Ideally, every business objective your website can achieve should be represented as a goal conversion. Conversions can come in many forms, but here are some of the most common ones:

- Contact form submission

- Quote request form submission

- Phone call

- Text message

- Chat

- Appointment booking

- Newsletter signup

- E-commerce purchase

How you slice up your goals will vary with your needs, but I generally try to group similar “types” of conversions into a single goal. If I have several different contact forms on a site (like a quick contact form in the sidebar, and a heftier one on the contact page), I might group those as a single goal. You can always dig deeper to see the specific breakdown, but it’s nice to keep goals as neat and tidy as possible.

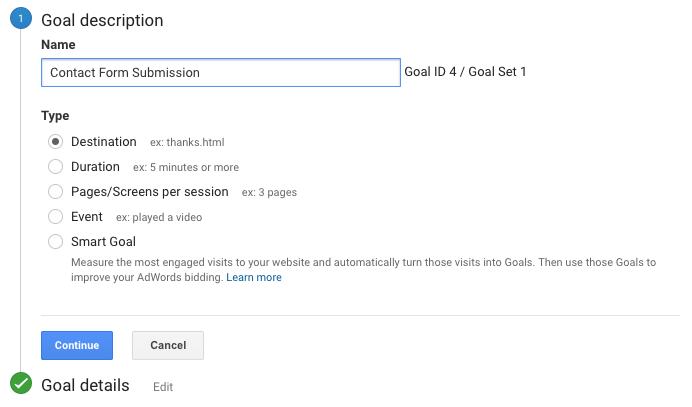

To create a goal in Analytics:

- Navigate to the Admin screen.

- Under the appropriate View, select Goals and then + New Goal.

- You can choose either a goal Template or Custom. Most goals are easiest to set up choosing Custom.

- Give your goal a name (ex. Contact Form Submission) and choose a type. Most goals for local businesses will either be a Destination or an Event.

Pro tip: Analytics allows you to associate a dollar value to your goal conversions. If you can tie your goals to their actual value, it can be a powerful metric to measure performance with. A common way to determine the value of a goal is to take the average value of a sale and multiply it by the average closing rate of Internet leads. For example, if your average sale is worth $1,000, and you typically close 1/10 of leads, your goal value would be $100.
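If you'd rather not do the goal value math in your head, here's a minimal sketch of the calculation from the pro tip above. The function name and the range check are my own assumptions for illustration, not anything Analytics provides.

```python
def goal_value(average_sale: float, close_rate: float) -> float:
    """Estimate the value of one lead: average sale times the share of leads that close.

    close_rate is a fraction between 0 and 1 (e.g. 0.10 for "1 in 10 leads").
    """
    if not 0 <= close_rate <= 1:
        raise ValueError("close_rate must be a fraction between 0 and 1")
    return average_sale * close_rate


# The example from the pro tip: a $1,000 average sale, closing 1 in 10 leads.
print(goal_value(1000, 0.10))  # 100.0
```

The result is the number you'd enter as the goal's value when you create it.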

Form tracking

The simplest way to track form fills is to have the form redirect to a “Thank You” page upon submission. This is usually my preferred setup; it’s easy to configure, and I can use the Thank You page to recommend other services, articles, etc. on the site and potentially keep the user around. I also find a dedicated Thank You page to provide the best affirmation that the form submission actually went through.

Different forms can all use the same Thank You page, and pass along variables in the URL to distinguish themselves from each other so you don’t have to create a hundred different Thank You pages to track different forms or goals. Most decent form plugins for WordPress are capable of this. My favorite is Gravityforms. Contact Form 7 and Ninja Forms are also very popular (and free).
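To make the shared Thank You page idea concrete, here's a rough sketch of how those redirect URLs might be built. The `/thank-you/` path and the `form` parameter name are hypothetical; your form plugin will have its own way of setting the redirect, and you can name the variable whatever you like.

```python
from urllib.parse import urlencode

# Hypothetical path for the single shared Thank You page.
THANK_YOU_PATH = "/thank-you/"


def thank_you_url(form_name: str) -> str:
    """Build the redirect URL for a form, tagging it with a 'form' query variable."""
    return f"{THANK_YOU_PATH}?{urlencode({'form': form_name})}"


print(thank_you_url("sidebar-contact"))  # /thank-you/?form=sidebar-contact
print(thank_you_url("quote-request"))    # /thank-you/?form=quote-request
```

With URLs like these, one Destination goal could match everything that begins with `/thank-you/`, while the `form` variable still lets you tell the individual forms apart in your reports.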

Another option is using event tracking. Event tracking allows you to track the click of a button or link (the submit button, in the case of a web form). This would circumvent the need for a thank you page if you don’t want to (or can’t) send the user elsewhere when they submit a form. It’s also handy for other, more advanced forms of tracking.

Here’s a handy plugin for Gravityforms that makes setting up event tracking a snap.

Once you’ve got your form redirecting to a Thank You page or generating an event, you just need to create a goal in Analytics with the corresponding value.

You can use Thank You pages or events in a similar manner to track appointment booking, web chats, newsletter signups, etc.

Call tracking

Many businesses and marketers have adopted form tracking, since it’s easy and free. That’s great. But for most businesses, it leaves a huge volume of web conversions untracked.

If you’re spending cash to generate traffic to your site, you could be hemorrhaging budget if you’re not collecting and attributing the phone call conversions from your website.

There are several solutions and approaches to call tracking. I use and recommend CallRail, which also seems to have emerged as the darling of the digital marketing community over the past few years thanks to its ease of use, great support, fair pricing, and focus on integration. Another option (so I don’t come across as completely biased) is CallTrackingMetrics.

You’ll want to make sure your call tracking platform allows for integration with Google Analytics and offers something called “dynamic number insertion.”

Dynamic number insertion uses JavaScript to detect your actual local phone number on your website and replace it with a tracking number when a user loads your page.

Dynamic insertion is especially important in the context of local SEO, since it allows you to keep your real, local number on your site, and maintain NAP consistency with the rest of your business’s citations. Assuming it’s implemented properly, Google will still see your real number when it crawls your site, but users will get a tracked number.

Basically, magic.

There are a few ways to implement dynamic number insertion. For most businesses, one of these two approaches should fit the bill.

Number per source

With this approach, you’ll create a tracking number for each source you wish to track calls for. These sources might be:

- Organic search traffic

- Paid search traffic

- Facebook referral traffic

- Yelp referral traffic

- Direct traffic

- Vanity URL traffic (for visitors coming from an offline TV or radio ad, for example)

When someone arrives at your website from one of these predefined sources, the corresponding number will show in place of your real number, wherever it’s visible. If someone calls that number, an event will be passed to Analytics along with the source.
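The lookup described above is simple enough to sketch in a few lines. This is only an illustration of the logic: real call tracking platforms do this with their own JavaScript snippet in the visitor's browser, and the source labels and 555 phone numbers below are made up.

```python
# Hypothetical numbers for illustration only.
REAL_NUMBER = "555-0100"

# One tracking number per predefined source.
TRACKING_NUMBERS = {
    "organic": "555-0101",
    "paid": "555-0102",
    "facebook": "555-0103",
    "yelp": "555-0104",
}


def number_to_display(source: str) -> str:
    """Return the tracking number for a predefined source, else the real number."""
    return TRACKING_NUMBERS.get(source, REAL_NUMBER)


print(number_to_display("paid"))        # 555-0102
print(number_to_display("newsletter"))  # 555-0100 (not predefined, so untracked)
```

Notice the fallback: a visitor from a source you didn't define simply sees your real number, and their call never gets attributed.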

This approach isn’t perfect, but it’s a solid solution if your site gets large amounts of traffic (5k+ visits/day) and you want to keep call tracking costs low. It will do a solid job of answering the basic questions of how many calls your site generates and where they came from, but it comes with a few minor caveats:

- Calls originating from sources you didn’t predefine will be missed.

- Events sent to Analytics will create artificial sessions not tied to actual user sessions.

- Call conversions coming from Adwords clicks won’t be attached to campaigns, ad groups, or keywords.

Some of these issues have more advanced workarounds. None of them are deal breakers… but you can avoid them completely with number pools — the awesomest call tracking method.

Number pools

“Keyword Pools,” as CallRail refers to them, are the killer app for call tracking. As long as your traffic doesn’t make this option prohibitively expensive (which won’t be a problem for most local business websites), this is the way to go.

In this approach, you create a pool with several numbers (8+ with CallRail). Each concurrent visitor on your site is assigned a different number, and if they call it, the conversion is attached to their session in Analytics, as well as their click in Adwords (if applicable). No more artificial sessions or disconnected conversions, and as long as you have enough numbers in your pool to cover your site’s traffic, you’ll capture all calls from your site, regardless of source. It’s also much quicker to set up than a number per source, and will even make you more attractive and better at sports!

You generally have to pay your call tracking provider for additional numbers, and you’ll need a number for each concurrent visitor to keep things running smoothly, so this is where massive amounts of traffic can start to get expensive. CallRail recommends you look at your average hourly traffic during peak times and include ¼ the tally as numbers in your pool. So if you have 30 visitors per hour on average, you might want ~8 numbers.
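Here's a quick sketch of that rule of thumb. Rounding up and the default floor of 8 numbers (my reading of the "8+ with CallRail" note above) are assumptions, so check your provider's current guidance before buying numbers.

```python
import math


def pool_size(peak_hourly_visitors: int, minimum: int = 8) -> int:
    """Suggest a pool size: one quarter of peak hourly visitors, rounded up.

    'minimum' is an assumed floor for the pool, not an official figure.
    """
    return max(minimum, math.ceil(peak_hourly_visitors / 4))


print(pool_size(30))   # 8  (30 / 4 = 7.5, rounded up)
print(pool_size(100))  # 25
```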

Implementation

Once you’ve got your call tracking platform configured, you’ll need to implement some code on your site to allow the dynamic number insertion to work its magic. Most platforms will provide you with a code snippet and instructions for installation. If you use CallRail and WordPress, there’s a handy plugin to make things even simpler. Just install, connect, and go.

To get your calls recorded in Analytics, you’ll just need to enable that option from your call tracking service. With CallRail you simply enable the integration, add your domain, and calls will be sent to your Analytics account as Events. Just like with your form submissions, you can add these events as a goal. Usually it makes sense to add a single goal called “Phone Calls” and set your event conditions according to the output from your call tracking service. If you’re using CallRail, it will look like this:

Google Search Console

It’s easy to forget to set up Search Console (formerly Webmaster Tools), because most of the time it plays a backseat role in your digital marketing measurement. But miss it, and you’ll forgo some fundamental technical SEO basics (country setting, XML sitemaps, robots.txt verification, crawl reports, etc.), and you’ll miss out on some handy keyword click data in the Search Analytics section. Search Console data can also be indispensable for diagnosing penalties and other problems down the road, should they ever pop up.

Make sure to connect your Search Console with your Analytics property, as well as your Adwords account.

With all the basics of your tracking setup in place, the next step is to bring your paid advertising data into the mix.

Google Adwords

Adwords is probably the single most convincing reason to get proper tracking in place. Without it, you can spend a lot of money on clicks without really knowing what you get out of it. Conversion data in Adwords is also absolutely critical in making informed optimizations to your campaign settings, ad text, keywords, and so on.

If you’d like some more of my rantings on conversions in Adwords and some other ways to get more out of your campaigns, check out this recent article 🙂

Getting your data flowing in all the right directions is simple, but often overlooked.

Linking with Analytics

First, make sure your Adwords and Analytics accounts are linked. Always make sure you have auto-tagging enabled on your Adwords account. Now all your Adwords data will show up in the Acquisition > Adwords area of Analytics. This is a good time to double-check that you have the currency correctly set in Analytics (Admin > View Settings); otherwise, your Adwords spend will be converted to the currency set in Analytics and record the wrong dollar values (and you can’t change data that’s already been imported).

Next, you’ll want to get those call and form conversions from Analytics into Adwords.

Importing conversions in Adwords

Some Adwords management companies/consultants might disagree, but I strongly advocate an Analytics-first approach to conversion tracking. You can get call and form conversions pulled directly into Adwords by installing a tracking code on your site. But don’t.

Instead, make sure all your conversions are set up as goals in Analytics, and then import them into Adwords. This allows Analytics to act as your one-stop-shop for reviewing your conversion data, while providing all the same access to that data inside Adwords.

Call extensions & call-only ads

This can throw some folks off. You will want to track call extensions natively within Adwords. These conversions are set up automatically when you create a call extension in Adwords and elect to use a Google call forwarding number with the default settings.

Don’t worry though, you can still get these conversions tracked in Analytics if you want to (I could make an argument either for or against). Simply create a single “offline” tracking number in your call tracking platform, and use that number as the destination for the Google forwarding number.

This also helps counteract one of the oddities of Google’s call forwarding system. Google will actually only start showing the forwarding number on desktop ads after they have received a certain (seemingly arbitrary) minimum number of clicks per week. As a result, some calls are tracked and some aren’t — especially on smaller campaigns. With this little trick, Analytics will show all the calls originating from your ads — not just ones that take place once you’ve paid Google enough each week.

Adwords might give you a hard time for using a number in your call extensions that isn’t on your website. If you encounter issues with getting your number verified for use as a call extension, just make sure you have linked your Search Console to your Adwords account (as indicated above).

Now you’ve got Analytics and Adwords all synced up, and your tracking regimen is looking pretty gnarly! There are a few other cool tools you can use to take full advantage of your sweet setup.

Google Tag Manager

If you’re finding yourself putting a lot of code snippets on your site (web chat, Analytics, call tracking, Adwords, Facebook Pixels, etc), Google Tag Manager is a fantastic tool for managing them all from one spot. You can also do all sorts of advanced slicing and dicing.

GTM is basically a container that you put all your snippets in, and then you put a single GTM snippet on your site. Once installed, you never need to go back to your site’s code to make changes to your snippets. You can manage them all from the GTM interface in a user-friendly, version-controlled environment.

Don’t bother if you just need Analytics on your site (and are using the CallRail plugin). But for more robust needs, it’s well worth considering for its sheer power and simplicity.

Here’s a great primer on making use of Google Tag Manager.

UTM tracking URLs & Google Campaign URL Builder

Once you’ve got conversion data occupying all your waking thoughts, you might want to take things a step further. Perhaps you want to track traffic and leads that come from an offline advertisement, a business card, an email signature, etc. You can build tracking URLs that include UTM parameters (campaign, source, and medium), so that when visitors come to your site from a certain place, you can tell where that place was!

Once you know how to build these URLs, you don’t really need a tool, but Google’s Campaign URL Builder makes quick enough work of it that it’s bound to earn a spot in your browser’s bookmarks bar.

Pro tip: Use a tracking URL on your Google My Business listing to help distinguish traffic/conversions coming in from your listing vs traffic coming in from the organic search results. I’d recommend using:

Source: google

Medium: organic

Campaign name: gmb-listing (or something)

This way your GMB traffic still shows up in Analytics as normal organic traffic, but you can drill down to the gmb-listing campaign to see its specific performance.
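If you ever want to build these URLs without the tool, here's a minimal sketch. The `utm_source`, `utm_medium`, and `utm_campaign` parameter names are the standard ones; the function itself and the example.com address are just placeholders for illustration.

```python
from urllib.parse import urlencode


def utm_url(base_url: str, source: str, medium: str, campaign: str) -> str:
    """Append UTM campaign parameters to a landing page URL."""
    params = {
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
    }
    # Use '&' if the URL already has a query string, otherwise start one with '?'.
    separator = "&" if "?" in base_url else "?"
    return f"{base_url}{separator}{urlencode(params)}"


# The GMB listing URL suggested in the pro tip above.
print(utm_url("https://www.example.com/", "google", "organic", "gmb-listing"))
# https://www.example.com/?utm_source=google&utm_medium=organic&utm_campaign=gmb-listing
```

The same function works for email signatures, business cards, and so on. Just swap in a different source, medium, and campaign name.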

Bonus pro tip: Use a vanity domain or a short URL on print materials or offline ads, and point it to a tracking URL to measure their performance in Analytics.

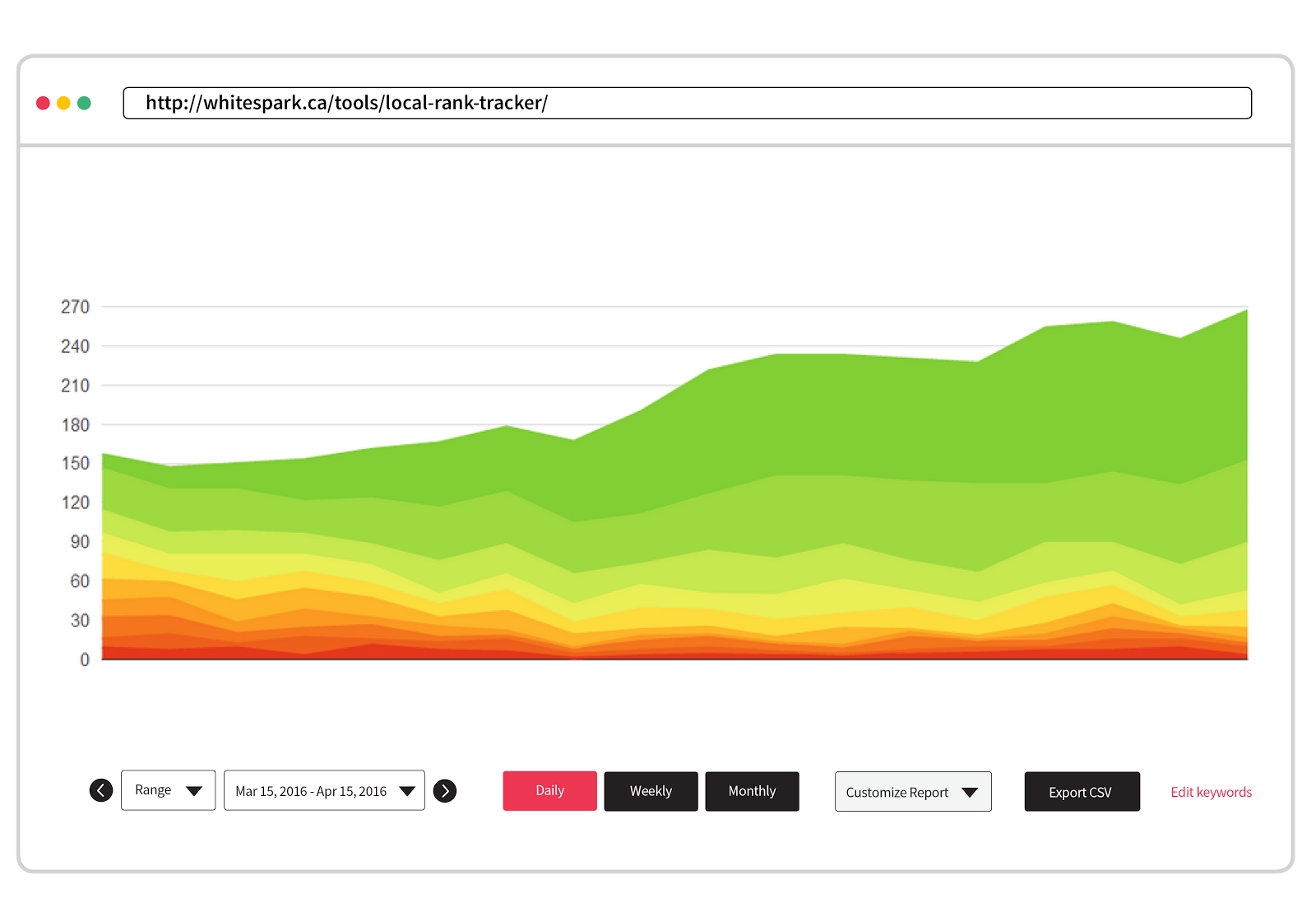

Rank tracking

Whaaat? Rank tracking is a dirty word to conversion tracking purists, isn’t it?

Nah. It’s true that rank tracking is a poor primary metric for your digital marketing efforts, but it can be very helpful as a supplemental metric and for helping to diagnose changes in traffic, as Darren Shaw explored here.

For local businesses, we think our Local Rank Tracker is a pretty darn good tool for the job.

Google My Business Insights

Your GMB listing is a foundational piece of your local SEO infrastructure, and GMB Insights offer some meaningful data (impressions and clicks for your listing, mostly). It also tries to tell you how many calls your listing generates for you, but it comes up a bit short since it relies on “tel:” links instead of tracking numbers. It will tell you how many people clicked on your phone number, but not how many actually made the call. It also won’t give you any insights into calls coming from desktop users.

There’s a great workaround though! It just might freak you out a bit…

Fire up your call tracking platform once more, create an “offline” number, and use it as your “primary number” on your GMB listing. Don’t panic. You can preserve your NAP consistency by demoting your real local number to an “additional number” slot on your GMB listing.

I don’t consider this a necessary step, because you’re probably not pointing your paid clicks to your GMB listing. However, combined with a tracking URL pointing to your website, you can now fully measure the performance of Google My Business for your business!

Disclaimer: I believe that this method is totally safe, and I’m using it myself in several instances, but I can’t say with absolute certainty that it won’t impact your rankings. Whitespark is currently testing this out on a larger scale, and we’ll share our findings once they’re assembled!

Taking it all in

So now you’ve assembled a lean, mean tracking machine. You’re already feeling 10 years younger, and everyone pays attention when you enter the room. But what can you do with all this power?

Here are a few ways I like to soak up this beautiful data.

Pop into Analytics

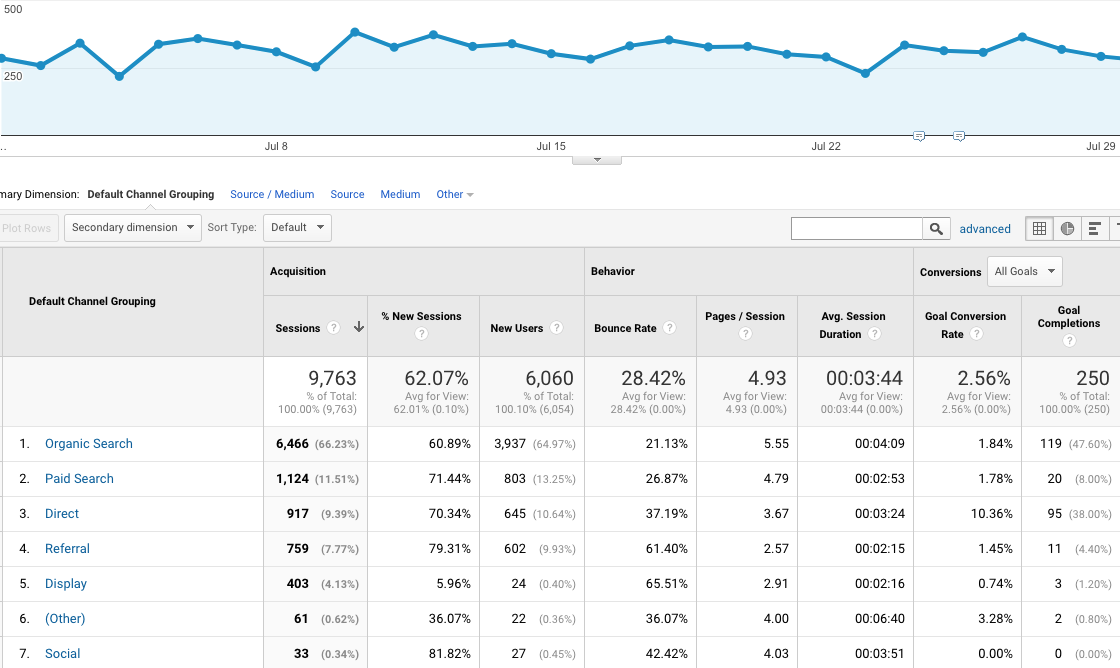

Since we’ve centralized all our tracking in Analytics, we can answer pretty much any performance questions we have within a few simple clicks.

- How many calls and form fills did we get last month from our organic rankings?

- How does that compare to the month before? Last year?

- How many paid conversions are we getting? How much are we paying on average for them?

- Are we doing anything expensive that isn’t generating many leads?

- Does our Facebook page generate any leads on our website?

There are a billion and seven ways to look at your Analytics data, but I do most of my ogling from Acquisition > All Traffic > Channels. Here you get a great overview of your traffic and conversions sliced up by channels (Organic Search, Paid Search, Direct, Referral, etc). You can obviously adjust date ranges, compare to past date ranges, and view conversion metrics individually or as a whole. For me, this is Analytics home base.

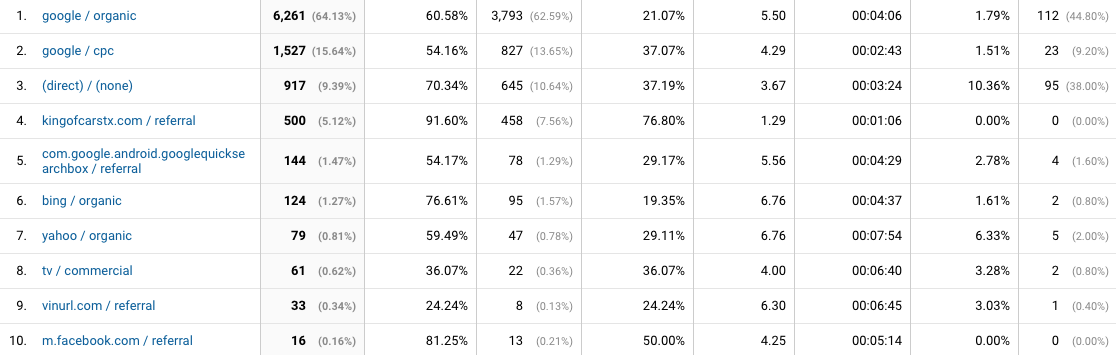

Acquisition > All Traffic > Source/Medium can be equally interesting, especially if you’ve made good use of tracking URLs.

Make some sweet SEO reports

I can populate almost my entire standard SEO client report from the Acquisition section of Analytics. Making conversions the star of the show really helps to keep clients engaged in their monthly reporting.

Google Analytics dashboards

Google’s Dashboards inside Analytics provide a great way to put the most important metrics together on a single screen. They’re easy to use, but I’ve always found them a bit limiting. Fortunately for data junkies, Google has recently released its next generation data visualization product…

Google Data Studio

This is pretty awesome. It’s very flexible, powerful, and user-friendly. I’d recommend skipping the Analytics Dashboards and going straight to Data Studio.

It will allow you to beautifully dashboard-ify your data from Analytics, Adwords, Youtube, DoubleClick, and even custom databases or spreadsheets. All the data is “live” and dynamic. Users can even change data sources and date ranges on the fly! Bosses love it, clients love it, and marketers love it… provided everything is performing really well 😉

Supermetrics

If you want to get really fancy, and build your own fully custom dashboard, develop some truly bespoke analysis tools, or automate your reporting regimen, check out Supermetrics. It allows you to pull data from just about any source into Google Sheets or Excel. From there, your only limitation is your mastery of spreadsheet-fu and your imagination.

TL;DR

So that’s a lot of stuff. If you’d like to skip the more nuanced explanations, pro tips, and bad jokes, here’s the gist in point form:

- Tracking your digital marketing is super important.

- Don’t just track traffic. Tracking conversions is critical.

- Use Google Analytics. Don’t let your agency use their own account.

- Set up goals for every type of lead (forms, calls, chats, bookings, etc).

- Track forms with destinations (thank you pages) or events.

- Track your calls, probably using CallRail.

- Use “number per source” if you have a huge volume of traffic; otherwise, use number pools (AKA keyword pools). Pools are better.

- Set up Search Console and link it to your Analytics and Adwords accounts.

- Link Adwords with Analytics.

- Import Analytics conversions into Adwords instead of using Adwords’ native conversion tracking snippet…

- …except for call extensions. Track those within Adwords AND in Analytics (if you want to) by using an “offline” tracking number as the destination for your Google forwarding numbers.

- Use Google Tag Manager if you have more than a couple third-party scripts to run on your site (web chat, Analytics, call tracking, Facebook Pixels etc).

- Use Google Campaign URL Builder to create tracked URLs for tracking visitors from various sources like offline advertising, email signatures, etc.

- Use a tracked URL on your GMB listing.

- Use a tracked number as your “primary” GMB listing number (if you do this, make sure you put your real local number as a “secondary” number). Note: We think this is safe, but we don’t have quite enough data to say so unequivocally. YMMV.

- Use vanity domains or short URLs that point to your tracking URLs to put on print materials, TV spots, etc.

- Track your rankings like a boss.

- Acquisition > All Traffic > Channels is your new Analytics home base.

- Consider making some Google Analytics Dashboards… and then don’t, because Google Data Studio is way better. So use that.

- Check out Supermetrics if you want to get really hardcore.

- Don’t let your dreams be dreams.

If you’re new to tracking your digital marketing, I hope this provides a helpful starting point, and helps cut through some of the confusion and uncertainty about how to best get set up.

If you’re a conversion veteran, I hope there are a few new or alternative ideas here that you can use to improve your setup.

If you’ve got anything to add, correct, or ask, leave a comment!

